Vision Callbacks

Useful callbacks for vision models.

Note

We rely on the community to keep these updated and working. If something doesn’t work, we’d really appreciate a contribution to fix!

Confused Logit

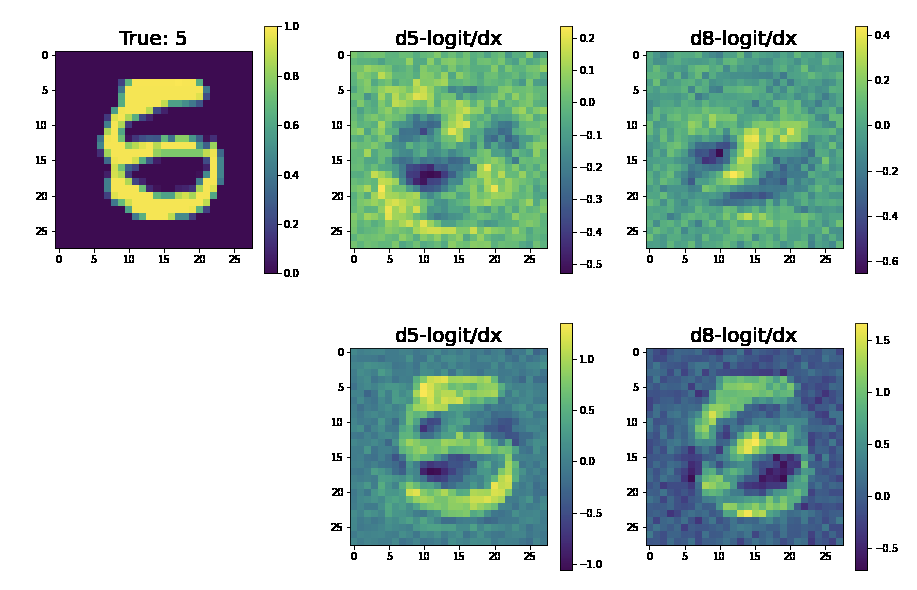

Shows how the input would have to change to move the prediction from one logit to the other.

Example outputs:

- class pl_bolts.callbacks.vision.confused_logit.ConfusedLogitCallback(top_k, min_logit_value=5.0, logging_batch_interval=20, max_logit_difference=0.1)

Bases: pytorch_lightning.callbacks.callback.Callback

Warning

The feature ConfusedLogitCallback is currently marked under review. Compatibility with other Lightning projects is not guaranteed, and the API and functionality may change without warning in future releases. More details: https://lightning-bolts.readthedocs.io/en/latest/stability.html

Takes the logit predictions of a model. When the probabilities of two classes are very close, the model does not have high certainty that it should pick one class over the other.

This callback shows how the input would have to change to swing the model from one label prediction to the other.

In this case, the network predicts a 5… but gives almost equal probability to an 8. The images show what about the original 5 would have to change to make it more like a 5 or more like an 8.

For each confused prediction, the confused images are generated by taking the gradient of each of the two closest top logits with respect to the input.
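The gradient step described above can be sketched roughly as follows. This is a minimal illustration in plain PyTorch, not the callback's own code: the helper name top2_logit_gradients and the small linear classifier are hypothetical stand-ins, and the sketch assumes each sample's logits depend only on that sample's input.

```python
import torch
from torch import nn


def top2_logit_gradients(model, x):
    """Return the top-2 class indices per sample and d(logit)/d(input) for each."""
    x = x.clone().requires_grad_(True)
    logits = model(x)                          # shape: (batch, num_classes)
    _, idxs = torch.topk(logits, k=2, dim=1)   # two largest logits per sample
    grads = []
    for rank in range(2):
        # Summing the selected logit over the batch still yields per-sample
        # gradients, provided no layer mixes samples (e.g. batch norm in training).
        selected = logits.gather(1, idxs[:, rank:rank + 1]).sum()
        (grad,) = torch.autograd.grad(selected, x, retain_graph=True)
        grads.append(grad)
    return idxs, grads


torch.manual_seed(0)
# Hypothetical stand-in classifier for 28x28 single-channel images.
model = nn.Sequential(nn.Flatten(), nn.Linear(28 * 28, 10))
images = torch.rand(4, 1, 28, 28)
idxs, (grad_first, grad_second) = top2_logit_gradients(model, images)
```

Each gradient has the same shape as the input batch, so it can be viewed as an image showing which pixels would push the prediction toward the corresponding class.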

Example:

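    from pytorch_lightning import Trainer
    from pl_bolts.callbacks.vision import ConfusedLogitCallback

    # top_k has no default in the class signature, so a value must be passed (1 is illustrative)
    trainer = Trainer(callbacks=[ConfusedLogitCallback(top_k=1)])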

Note

Whenever called, this callback will look for self.last_batch and self.last_logits in the LightningModule.

Note

This callback supports tensorboard only right now.
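One way a module might expose the self.last_batch and self.last_logits attributes mentioned in the note above is to cache them inside training_step. The sketch below is an assumption about usage, not code from the library: the class ClassifierSketch is hypothetical and subclasses torch.nn.Module only to stay self-contained, whereas a real project would subclass LightningModule.

```python
import torch
import torch.nn.functional as F
from torch import nn


class ClassifierSketch(nn.Module):  # in practice this would be a LightningModule
    def __init__(self, in_features=28 * 28, num_classes=10):
        super().__init__()
        self.net = nn.Linear(in_features, num_classes)
        self.last_batch = None
        self.last_logits = None

    def forward(self, x):
        return self.net(x.flatten(start_dim=1))

    def training_step(self, batch, batch_idx):
        x, y = batch
        logits = self(x)
        # Cache the attributes the callback is documented to look for.
        # Detaching the logits is a choice made here, not a documented requirement.
        self.last_batch = batch
        self.last_logits = logits.detach()
        return F.cross_entropy(logits, y)


model = ClassifierSketch()
batch = (torch.rand(4, 1, 28, 28), torch.randint(0, 10, (4,)))
loss = model.training_step(batch, 0)
```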

Authored by:

Alfredo Canziani

- Parameters

  - projection_factor – How much to multiply the input image to make it look more like this logit label
  - min_logit_value (float) – Only consider logit values above this threshold
  - logging_batch_interval (int) – How frequently to inspect/potentially plot something
  - max_logit_difference (float) – When the top 2 logits are within this threshold we consider them confused
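As a rough illustration of how min_logit_value and max_logit_difference could interact, the sketch below flags a sample as confused when its largest logit exceeds the first threshold and its two largest logits differ by less than the second. The function name find_confused and the per-sample application of both thresholds are assumptions made for illustration, not the callback's actual implementation.

```python
def find_confused(logits_batch, min_logit_value=5.0, max_logit_difference=0.1):
    """Return [(sample_index, (top_class, runner_up_class)), ...] for confused samples."""
    confused = []
    for i, logits in enumerate(logits_batch):
        # Rank class indices by logit value, largest first.
        ranked = sorted(range(len(logits)), key=lambda c: logits[c], reverse=True)
        first, second = ranked[0], ranked[1]
        has_opinion = logits[first] > min_logit_value
        nearly_tied = abs(logits[first] - logits[second]) < max_logit_difference
        if has_opinion and nearly_tied:
            confused.append((i, (first, second)))
    return confused


batch = [
    [0.2, 6.10, 6.05, 1.0],  # top two are close and above the threshold: confused
    [0.2, 6.10, 3.00, 1.0],  # clear winner: not confused
    [0.2, 1.10, 1.05, 1.0],  # nearly tied, but below min_logit_value: ignored
]
print(find_confused(batch))  # [(0, (1, 2))]
```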

Tensorboard Image Generator

Generates images from a generative model and plots them to tensorboard.

- class pl_bolts.callbacks.vision.image_generation.TensorboardGenerativeModelImageSampler(num_samples=3, nrow=8, padding=2, normalize=False, norm_range=None, scale_each=False, pad_value=0)

Bases: pytorch_lightning.callbacks.callback.Callback

Warning

The feature TensorboardGenerativeModelImageSampler is currently marked under review. Compatibility with other Lightning projects is not guaranteed, and the API and functionality may change without warning in future releases. More details: https://lightning-bolts.readthedocs.io/en/latest/stability.html

Generates images and logs to tensorboard. Your model must implement the forward function for generation.

Requirements:

    # model must have img_dim arg
    model.img_dim = (1, 28, 28)

    # model forward must work for sampling
    z = torch.rand(batch_size, latent_dim)
    img_samples = your_model(z)
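A model meeting the two requirements above might look like the following minimal sketch. The class name TinyGenerator, the latent size of 16, and the single linear layer are all hypothetical choices for illustration; only the img_dim attribute and the forward call on a latent batch come from the requirements.

```python
import math

import torch
from torch import nn


class TinyGenerator(nn.Module):
    """Hypothetical generator that maps a latent vector to an image tensor."""

    def __init__(self, latent_dim=16, img_dim=(1, 28, 28)):
        super().__init__()
        self.latent_dim = latent_dim
        self.img_dim = img_dim  # requirement: the model exposes img_dim
        self.net = nn.Sequential(
            nn.Linear(latent_dim, math.prod(img_dim)),
            nn.Sigmoid(),  # keeps pixel values in (0, 1)
        )

    def forward(self, z):
        # requirement: forward works for sampling from a latent batch
        return self.net(z).view(z.size(0), *self.img_dim)


generator = TinyGenerator()
z = torch.rand(3, generator.latent_dim)
img_samples = generator(z)  # shape: (3, 1, 28, 28)
```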

Example:

    from pytorch_lightning import Trainer
    from pl_bolts.callbacks import TensorboardGenerativeModelImageSampler

    trainer = Trainer(callbacks=[TensorboardGenerativeModelImageSampler()])

- Parameters

  - num_samples (int) – Number of images displayed in the grid. Default: 3.
  - nrow (int) – Number of images displayed in each row of the grid. The final grid size is (B / nrow, nrow). Default: 8.
  - normalize (bool) – If True, shift the image to the range (0, 1), by the min and max values specified by norm_range. Default: False.
  - norm_range (Optional[Tuple[int, int]]) – Tuple (min, max) where min and max are numbers, then these numbers are used to normalize the image. By default, min and max are computed from the tensor.
  - scale_each (bool) – If True, scale each image in the batch of images separately rather than the (min, max) over all images. Default: False.
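The grid and normalization parameters above can be illustrated with a little arithmetic. The sketch assumes the grid is laid out the way torchvision.utils.make_grid does it, which the parameter names suggest but this page does not state; grid_layout and normalize_unit are hypothetical helpers written in plain Python.

```python
import math


def grid_layout(num_images, nrow=8, padding=2, img_hw=(28, 28)):
    """Return (rows, cols, grid_height, grid_width) for a padded image grid."""
    cols = min(nrow, num_images)            # nrow is the number of images per row
    rows = math.ceil(num_images / cols)
    height, width = img_hw
    grid_height = rows * (height + padding) + padding
    grid_width = cols * (width + padding) + padding
    return rows, cols, grid_height, grid_width


def normalize_unit(values, value_range=None):
    """Clamp to (min, max), then shift and scale into the range [0, 1]."""
    if value_range is not None:
        low, high = value_range
    else:
        low, high = min(values), max(values)  # default: computed from the data
    scale = max(high - low, 1e-5)             # guard against division by zero
    return [(min(max(v, low), high) - low) / scale for v in values]


print(grid_layout(3))                                        # (1, 3, 32, 92)
print(grid_layout(20))                                       # (3, 8, 92, 242)
print(normalize_unit([-1.0, 0.0, 3.0]))                      # [0.0, 0.25, 1.0]
print(normalize_unit([-1.0, 0.0, 3.0], value_range=(0, 2)))  # [0.0, 0.0, 1.0]
```

With the defaults num_samples=3 and nrow=8, all three images fit in a single row under this assumption.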

- on_train_epoch_end(trainer, pl_module)

Called when the train epoch ends.

To access all batch outputs at the end of the epoch, either:

1. Implement training_epoch_end in the LightningModule and access outputs via the module, OR

2. Cache data across train batch hooks inside the callback implementation to post-process in this hook.
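The second option, caching inside the callback, might be sketched as below. EpochLossCache is a hypothetical plain-Python class used to keep the example self-contained; in practice it would subclass the Lightning Callback base class. The on_train_batch_end signature and the assumption that outputs is a dict with a "loss" entry may differ between Lightning versions.

```python
class EpochLossCache:
    """Hypothetical callback-like class that caches per-batch losses."""

    def __init__(self):
        self.batch_losses = []
        self.epoch_means = []

    def on_train_batch_end(self, trainer, pl_module, outputs, batch, batch_idx):
        # Assumption: outputs is a dict holding the training loss under "loss".
        self.batch_losses.append(float(outputs["loss"]))

    def on_train_epoch_end(self, trainer, pl_module):
        # Post-process everything cached during the epoch, then reset.
        if self.batch_losses:
            mean_loss = sum(self.batch_losses) / len(self.batch_losses)
            self.epoch_means.append(mean_loss)
        self.batch_losses.clear()


# Simulate one epoch of three batches without a real Trainer.
cache = EpochLossCache()
for batch_idx, loss in enumerate([0.9, 0.7, 0.5]):
    cache.on_train_batch_end(None, None, {"loss": loss}, None, batch_idx)
cache.on_train_epoch_end(None, None)
```

Clearing the cache at the end of each epoch keeps results from one epoch from leaking into the next.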

- Return type